Manual

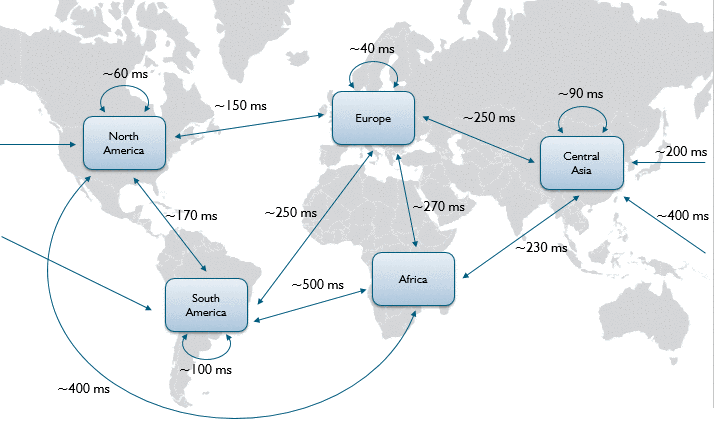

Latency is the time a signal needs to travel a defined distance. In the following, latency refers to the round-trip time, that is, from A to B and back. This is the time needed before each transaction can start: Client A has to contact Server B and receive an answer, and only then does the transaction start.

For example, latency within Europe is about 40 ms, and between Europe and South America about 250 ms. See the following figure.


Bandwidth defines how much data can be transferred per unit of time. For example, a 10 Mbit/s line can transfer 1.25 MB per second.
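The conversion above follows from 8 bits per byte. A minimal sketch, assuming an ideal line without protocol overhead; the function names and the 5 MB example part are illustrative and not taken from PARTsolutions:

```python
def mbit_to_mbyte_per_s(mbit_per_s: float) -> float:
    """Convert a line rate from Mbit/s to MB/s (8 bits per byte)."""
    return mbit_per_s / 8.0


def download_seconds(size_mbyte: float, mbit_per_s: float) -> float:
    """Ideal transfer time for a payload, ignoring latency and overhead."""
    return size_mbyte / mbit_to_mbyte_per_s(mbit_per_s)


print(mbit_to_mbyte_per_s(10))   # 1.25
print(download_seconds(5, 10))   # 4.0 (hypothetical 5 MB part on a 10 Mbit/s line)
```

Real transfer rates are lower, because the line is shared and each protocol layer adds overhead.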

Looking only at PARTsolutions, not all users are searching and selecting parts there at the same time.

For a customer's own parts, download times may be longer, depending on the bandwidth.

The available bandwidth is shared between all users and all applications at the same location (e.g. Mail, Browser, ERP, PDM, Remote Desktop, Citrix, …).

Conclusion: For many small transactions, latency matters more than bandwidth. For a few large transactions, bandwidth matters more than latency.
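This trade-off can be illustrated with a rough model in which every transaction costs one round trip and the payload is then transferred at the full line rate. It is only a sketch: the workloads (200 small transactions totalling 1 MB, one 100 MB download) are assumed for illustration, and only the latency figures of 40 ms and 250 ms come from the text above.

```python
def total_seconds(transactions: int, payload_mbyte: float,
                  latency_ms: float, bandwidth_mbit: float) -> float:
    """Estimate total time: one round trip per transaction plus transfer time."""
    latency_part = transactions * latency_ms / 1000.0
    transfer_part = payload_mbyte * 8.0 / bandwidth_mbit
    return latency_part + transfer_part


# Many small transactions (assumed: 200 requests, 1 MB in total, 10 Mbit/s).
print(f"{total_seconds(200, 1, 40, 10):.2f}")    # 8.80  (within Europe)
print(f"{total_seconds(200, 1, 250, 10):.2f}")   # 50.80 (Europe to South America)

# One large transaction (assumed: 100 MB, 40 ms latency).
print(f"{total_seconds(1, 100, 40, 10):.2f}")    # 80.04 (10 Mbit/s)
print(f"{total_seconds(1, 100, 40, 100):.2f}")   # 8.04  (100 Mbit/s)
```

In the first case, the higher latency multiplies the total time almost sixfold while the transfer part stays at 0.8 s. In the second case, ten times the bandwidth cuts the total time by roughly a factor of ten while latency is negligible.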

The following table shows typical PARTsolutions actions in different latency / bandwidth environments.

Note: Due to many possible side effects, the values shown can only serve as a rough estimate for a customer.

In a standard setup, the supplier parts, own parts and link database are accessed only through the PARTapplicationServer. Actions such as browsing and searching need only little bandwidth. Viewing a part requires downloading its data locally, which needs more bandwidth depending on the complexity of the part.

To compensate for low bandwidth or to save bandwidth, a Cache Server can be placed between the PARTapplicationServer and the Client. The Cache Server is placed at a company site to speed up all clients at that site.

CADENAS uses Squid as Cache Server, but if a company already has an HTTP Cache Server, it can be used instead.
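The general idea of routing HTTP requests through a cache at the company site can be sketched with Python's standard library. This does not show how PARTsolutions clients are actually configured; the host name `cache.example.internal` is hypothetical, and port 3128 is simply Squid's default listening port.

```python
import urllib.request


def opener_via_cache(cache_url: str) -> urllib.request.OpenerDirector:
    """Build an opener that sends HTTP(S) requests through the given cache."""
    proxy = urllib.request.ProxyHandler({"http": cache_url, "https": cache_url})
    return urllib.request.build_opener(proxy)


# Hypothetical cache address; no request is sent by building the opener.
opener = opener_via_cache("http://cache.example.internal:3128")
```

Requests opened with `opener.open(url)` would then go to the cache first, which answers from its local copy when it has one and forwards the request to the server otherwise.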